The Weird World of AI Hallucinations

When someone sees something that isn't there, people often refer to the experience as a hallucination. Hallucinations occur when your sensory perception does not correspond to external stimuli. Technologies that rely on artificial intelligence can have hallucinations, too.

When an algorithmic system generates information that seems plausible but is actually inaccurate or misleading, computer scientists call it an AI hallucination.

Editor's Note:

Guest authors Anna Choi and Katelyn Xiaoying Mei are Information Science PhD students. Anna's work focuses on the intersection of AI ethics and speech recognition. Katelyn's research focuses on psychology and Human-AI interaction. This article is republished from The Conversation under a Creative Commons license.

Researchers and users alike have found these behaviors in different types of AI systems, from chatbots such as ChatGPT to image generators such as Dall-E to autonomous vehicles. We are information science researchers who have studied hallucinations in AI speech recognition systems.

Wherever AI systems are used in daily life, their hallucinations can pose risks. Some may be minor – when a chatbot gives the wrong answer to a simple question, the user may end up ill-informed.

But in other cases, the stakes are much higher.

At this early stage of AI development, the issue isn't just with the machine's responses – it's also with how people tend to accept them as factual simply because they sound believable and plausible, even when they're not.

In settings ranging from courtrooms, where AI software is used to make sentencing decisions, to health insurance companies that use algorithms to determine a patient's eligibility for coverage, AI hallucinations can have life-altering consequences. They can even be life-threatening: autonomous vehicles use AI to detect obstacles, other vehicles and pedestrians.

Making it up

Hallucinations and their effects depend on the type of AI system. With large language models, hallucinations are pieces of information that sound convincing but are incorrect, made up or irrelevant.

A chatbot might create a reference to a scientific article that doesn't exist or provide a historical fact that is simply wrong, yet make it sound believable.

In a 2023 court case, for example, a New York attorney submitted a legal brief that he had written with the help of ChatGPT. A discerning judge later noticed that the brief cited a case that ChatGPT had made up. Had no one caught the fabricated citation, it could have affected the outcome of the case.

With AI tools that can recognize objects in images, hallucinations occur when the AI generates captions that are not faithful to the provided image.

Imagine asking a system to list the objects in an image that shows only a woman, from the chest up, talking on a phone, and receiving a response that describes a woman talking on a phone while sitting on a bench. The bench is a hallucination: nothing in the image shows one. In contexts where accuracy is critical, this kind of inaccurate information could have serious consequences.

What causes hallucinations

Engineers build AI systems by gathering massive amounts of data and feeding it into a computational system that detects patterns in the data. The system develops methods for responding to questions or performing tasks based on those patterns.

Supply an AI system with 1,000 photos of different breeds of dogs, labeled accordingly, and the system will soon learn to detect the difference between a poodle and a golden retriever. But feed it a photo of a blueberry muffin and, as machine learning researchers have shown, it may tell you that the muffin is a chihuahua.

When a system doesn't understand the question or the information that it is presented with, it may hallucinate. Hallucinations often occur when the model fills in gaps based on similar contexts from its training data, or when it is built using biased or incomplete training data. This leads to incorrect guesses, as in the case of the mislabeled blueberry muffin.

It's important to distinguish between AI hallucinations and intentionally creative AI outputs. When an AI system is asked to be creative – like when writing a story or generating artistic images – its novel outputs are expected and desired.

Hallucinations, on the other hand, occur when an AI system is asked to provide factual information or perform specific tasks but instead generates incorrect or misleading content while presenting it as accurate.

The key difference lies in the context and purpose: Creativity is appropriate for artistic tasks, while hallucinations are problematic when accuracy and reliability are required. To address these issues, companies have suggested using high-quality training data and limiting AI responses to follow certain guidelines. Nevertheless, these issues may persist in popular AI tools.

What's at risk

The impact of an output such as calling a blueberry muffin a chihuahua may seem trivial, but consider the different kinds of technologies that use image recognition systems: an autonomous vehicle that fails to identify objects could lead to a fatal traffic accident. An autonomous military drone that misidentifies a target could put civilians' lives in danger.

For AI tools that provide automatic speech recognition, hallucinations are AI transcriptions that include words or phrases that were never actually spoken. This is more likely to occur in noisy environments, where an AI system may end up adding new or irrelevant words in an attempt to decipher background noise such as a passing truck or a crying infant.

As these systems become more regularly integrated into health care, social service and legal settings, hallucinations in automatic speech recognition could lead to inaccurate clinical or legal outcomes that harm patients, criminal defendants or families in need of social support.

Check AI's work: Don't trust, verify

Regardless of AI companies' efforts to mitigate hallucinations, users should stay vigilant and question AI outputs, especially when they are used in contexts that require precision and accuracy.

Double-checking AI-generated information with trusted sources, consulting experts when necessary, and recognizing the limitations of these tools are essential steps for minimizing their risks.

(责任编辑:休闲)

-

本次赛事国际范儿十足,赛事精心设置大学生男子组、大学生女子组、公开组、团体接力组等5个组别,吸引意大利、新加坡、法国、马达加斯加等境外13个国家和地区的30名运动员报名参赛。比赛所用赛道依托四川省山地

...[详细]

本次赛事国际范儿十足,赛事精心设置大学生男子组、大学生女子组、公开组、团体接力组等5个组别,吸引意大利、新加坡、法国、马达加斯加等境外13个国家和地区的30名运动员报名参赛。比赛所用赛道依托四川省山地

...[详细]

-

记者从南京海关了解到,今年前7个月,长江经济带11省市外贸进出口值达11.23万亿元,创历史同期新高,占全国进出口总值的45.2%。其中,江苏省外贸进出口3.15万亿元,增长8.1%,地区外贸占长江经

...[详细]

记者从南京海关了解到,今年前7个月,长江经济带11省市外贸进出口值达11.23万亿元,创历史同期新高,占全国进出口总值的45.2%。其中,江苏省外贸进出口3.15万亿元,增长8.1%,地区外贸占长江经

...[详细]

-

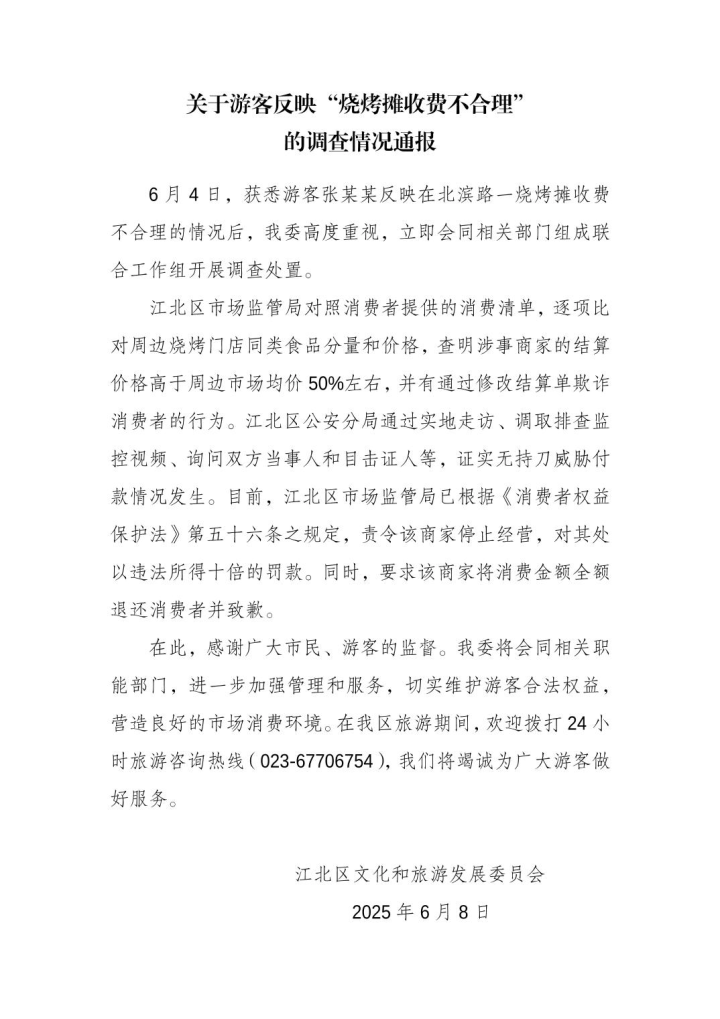

游客3人吃烧烤花780元?重庆通报:存在欺诈消费者行为,无持刀威胁情况

近日,一名上海游客发视频称,三人在重庆吃烧烤消费780元,实际签子数量比账单少了三分之一,还遭遇“阴阳价格”,引发网络热议。据重庆江北区融媒体中心消息,6月8日,江北区文化和旅游发展委员会发布关于游客

...[详细]

近日,一名上海游客发视频称,三人在重庆吃烧烤消费780元,实际签子数量比账单少了三分之一,还遭遇“阴阳价格”,引发网络热议。据重庆江北区融媒体中心消息,6月8日,江北区文化和旅游发展委员会发布关于游客

...[详细]

-

进入伏天,我省多地陆续开启高温模式。日前,省委社会工作部会同省卫生健康委、省总工会、团省委,部署开展“夏日送清凉”主题志愿服务活动,积极营造关心关爱户外工作者的良好社会氛围,提高户外工作者安全健康意识 ...[详细]

-

《inZOI(云族裔)》正式上线官方模组工具“inZOI ModKit”

6月13日,KRAFTON旗下的生活模拟游戏《inZOI(云族裔)》进行游戏更新,正式推出官方模组工具“inZOI ModKit”,并计划在6月—8月展开两次全球模组征集活动。“inZOI ModKi

...[详细]

6月13日,KRAFTON旗下的生活模拟游戏《inZOI(云族裔)》进行游戏更新,正式推出官方模组工具“inZOI ModKit”,并计划在6月—8月展开两次全球模组征集活动。“inZOI ModKi

...[详细]

-

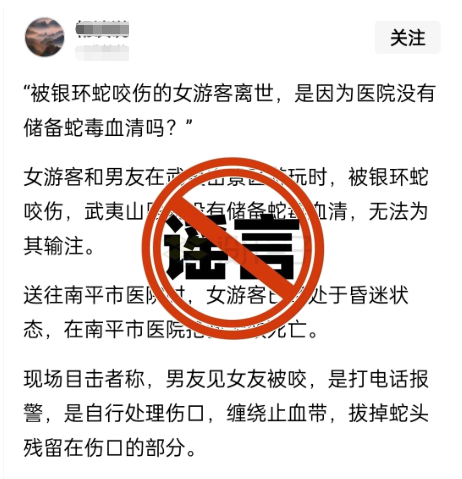

一女游客在武夷山被银环蛇咬伤致死?官方:与事实严重不符|破谣局

据武夷山市委网信办消息,近日,工作人员在日常网络巡查过程中发现,一网民在某网络平台发布信息称“一女游客和男友在武夷山景区游玩时,被银环蛇咬伤,武夷山医院没有储备蛇毒血清,无法为其输注。送往南平市医院时

...[详细]

据武夷山市委网信办消息,近日,工作人员在日常网络巡查过程中发现,一网民在某网络平台发布信息称“一女游客和男友在武夷山景区游玩时,被银环蛇咬伤,武夷山医院没有储备蛇毒血清,无法为其输注。送往南平市医院时

...[详细]

-

近日,《消逝的光芒》工作室Techland发布新作《消逝的光芒:困兽》的最新消息,并官宣将于2025年8月22日发售。随着发售日期的公布,一段长达30分钟的实机游玩视频也接踵而至。实机演示视频:在视频

...[详细]

近日,《消逝的光芒》工作室Techland发布新作《消逝的光芒:困兽》的最新消息,并官宣将于2025年8月22日发售。随着发售日期的公布,一段长达30分钟的实机游玩视频也接踵而至。实机演示视频:在视频

...[详细]

-

彪马独家推出HYROX联名系列, 助阵HYROX健身跑芝加哥世锦赛

德国黑措根奥拉赫, 2025年6月9日2025年HYROX健身跑芝加哥世界锦标赛开赛在即,全球知名运动品牌PUMA今日正式发布备受期待的第二季PUMA x HYROX联名系列运动服饰与运动鞋款。该系列

...[详细]

德国黑措根奥拉赫, 2025年6月9日2025年HYROX健身跑芝加哥世界锦标赛开赛在即,全球知名运动品牌PUMA今日正式发布备受期待的第二季PUMA x HYROX联名系列运动服饰与运动鞋款。该系列

...[详细]

-

五月天《回到那一天》25周年巡回演唱会杭州奥体5场火热开唱 创下演出场地新纪录

五月天24、25、27、28日在杭州奥体中心体育场,《回到那一天》25周年巡回演唱会继续与你相遇;6月13、14、15日举办哈尔滨站・冰城环场版;接着回到25年最初的起点——台

...[详细]

五月天24、25、27、28日在杭州奥体中心体育场,《回到那一天》25周年巡回演唱会继续与你相遇;6月13、14、15日举办哈尔滨站・冰城环场版;接着回到25年最初的起点——台

...[详细]

-

现在喜爱传奇SF的玩家数目依旧多不堪数,固然这款游戏推出的时光已经比拟长了,但经常会更新,也会出一些新地图与BOSS,弄法丰硕加上游戏打设备比拟赚金币,所以依旧吸引了很多新玩家参加。建议新人们玩之前懂

...[详细]

现在喜爱传奇SF的玩家数目依旧多不堪数,固然这款游戏推出的时光已经比拟长了,但经常会更新,也会出一些新地图与BOSS,弄法丰硕加上游戏打设备比拟赚金币,所以依旧吸引了很多新玩家参加。建议新人们玩之前懂

...[详细]

万能沙雕评论美女语录 适合用来夸美女的语录

万能沙雕评论美女语录 适合用来夸美女的语录 网易决定送每位玩家一颗星星,《星绘友晴天》今日首曝!

网易决定送每位玩家一颗星星,《星绘友晴天》今日首曝! 论组队打霸王教主的技能

论组队打霸王教主的技能